Difference between revisions of "ENZO"

Revision as of 23:03, 2 June 2009

ENZO Performance Study Summary

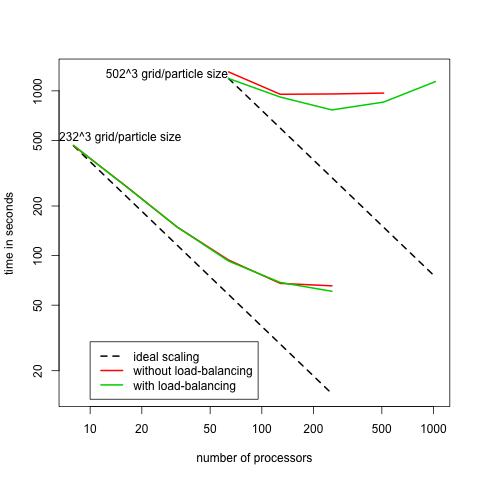

This page shows performance results for ENZO built from the svn repository. We did this in part to see the effect of load balancing (not enabled in version 1.5) on scaling performance. The previous performance results for ENZO version 1 are available here.

Enzo Version 1.5

Following the release of Enzo 1.5 in November 2008, we have done some follow-up performance studies. Our initial findings are similar to what we found for version 1. For example, see this chart showing the scaling behavior of Enzo 1.5 on Kraken:

Scaling behavior was very similar on Ranger.

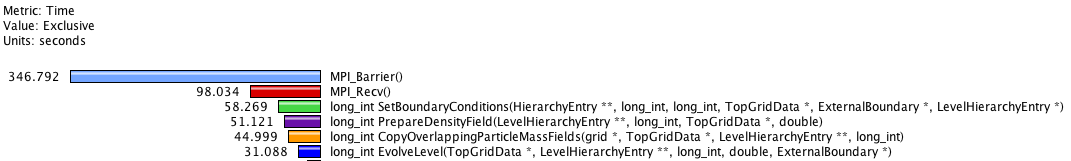

This poor scaling behavior could be anticipated by looking at the runtime breakdown (mean of 64 processors on Ranger):

With this much time spent in MPI communication, increasing the number of processors is unlikely to result in much faster simulations. Looking more closely at MPI_Recv and MPI_Barrier, we see that on average 5.2 ms is spent per call in MPI_Recv and 40.4 ms per call in MPI_Barrier. This is much longer than can be explained by communication latency on Ranger's InfiniBand interconnect. Most likely, then, ENZO is experiencing a load imbalance that causes some processors to wait for others to enter the barrier or to send via MPI_Send.
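To illustrate why a load imbalance shows up as time inside MPI_Barrier, here is a minimal sketch in plain Python (no MPI required). It assumes every rank enters the barrier as soon as its own work is done, so each rank idles until the slowest rank arrives. The function names and the per-rank work times are hypothetical and chosen only for illustration; they are not measurements from ENZO.

```python
def barrier_wait_times(work_ms):
    """Time each rank idles at a barrier, given its compute time before the barrier."""
    slowest = max(work_ms)
    # A rank that finishes early waits until the slowest rank reaches the barrier.
    return [slowest - w for w in work_ms]


def imbalance_factor(work_ms):
    """Slowest rank's work divided by the mean work (1.0 means perfectly balanced)."""
    mean = sum(work_ms) / len(work_ms)
    return max(work_ms) / mean


# Hypothetical compute times (ms) for one step on 4 ranks; rank 2 is overloaded.
work_ms = [10.0, 12.0, 50.0, 8.0]

waits = barrier_wait_times(work_ms)
mean_wait = sum(waits) / len(waits)

print("per-rank barrier wait (ms):", waits)
print("mean barrier wait (ms):", mean_wait)
print("imbalance factor:", imbalance_factor(work_ms))
```

In this toy case a single overloaded rank makes the mean barrier wait far larger than any network latency would, which is the same pattern the profile above suggests.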

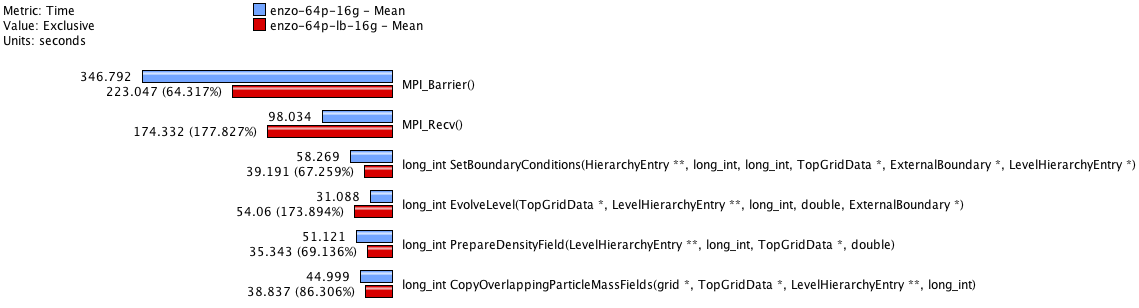

Next we looked at how enabling load balancing affects performance. This is a runtime comparison between a non-load-balanced and a load-balanced simulation.

Time spent in MPI_Barrier decreases, but this is mostly offset by an increase in time spent in MPI_Recv.

Callpath profiling gives us an idea of where most of the costly MPI communication is taking place.

The MPI_Barrier calls take place in EvolveLevel(), and the MPI_Recv calls take place in grid::CommunicationSendRegions().